Julien Bayle: Uncertainty, Fragility, Max, and Live

As technology advances, different aesthetic media seem to blur together. Using modern computers and control interfaces, plus powerful tools like Ableton Live, Push, and Max, Julien Bayle composes music, creates visual art, and performs both – not to mention his educational activity as an Ableton Certified Trainer and author of multiple books on music and audio programming. Coming out of a number of diverse projects (and working on a series of new ones), Julien shared some perspectives with us on audio-visual performance, why he originally came to Live, and some critical tips for Live users of all levels.

Some of Julien’s visual work.

Much of your work features minimalist sounds and designs. How do you create most of your sounds?

I’m using many methods to create sounds. Almost all are digital-based, using Max 6. I’m also using Reaktor a lot. Then, I’m triggering everything in real-time. I don’t use a lot of samples on stage – it’s almost always sequencers triggering synthesized sounds. I like to have control over each part of my live set, from the sequence to the sound itself. I’m often using Reaktor sequencers that I’ve modified. I’m also designing my own sequencers using Max for Live, of course.

I like basic sound synthesis concepts – granular, additive, subtractive, wavetable. I want to keep my sound bits very minimal and, as I see it, you cannot keep the whole thing minimal with complex generative processes.

I also like to use algorithms to generate sound evolutions. I often start with a sequence, and I program more or less deterministic algorithms for evolving the sequence. For instance, you can imagine a filter triggered by an evolving algorithm instead of an LFO or my hand. It can be synced or not, but it is always weird [laughs].
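
As a rough illustration of this idea, here is a minimal Python sketch of a deterministic rule that evolves a step pattern and maps it to a filter cutoff. It is not Julien's actual patch: the `evolve` rule, the `to_cutoff_hz` mapping, and the 200 to 8000 Hz range are all assumptions chosen for the example.

```python
def evolve(pattern, generation):
    """Return the next generation of a step pattern (values 0..127)."""
    n = len(pattern)
    # Rotate by one step so the pattern drifts against the bar.
    rotated = pattern[1:] + pattern[:1]
    # Pull one step, picked by the generation counter, toward its neighbour.
    i = generation % n
    neighbour = rotated[(i + 1) % n]
    rotated[i] = (rotated[i] + neighbour) // 2
    return rotated


def to_cutoff_hz(value, low=200.0, high=8000.0):
    """Map a 0..127 step value to a cutoff on an exponential scale (assumed range)."""
    return low * (high / low) ** (value / 127)


pattern = [0, 32, 64, 127, 96, 16, 80, 48]
for generation in range(4):
    # Each pass yields a new cutoff curve in place of an LFO shape.
    print([round(to_cutoff_hz(v)) for v in pattern])
    pattern = evolve(pattern, generation)
```

Because the rule has no random element, the same starting pattern always unfolds the same way, which keeps the evolution repeatable from one performance to the next.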

How do you have your visuals interacting with your sounds?

I have always felt that sounds and visuals are two sides of the same creative matter. It’s probably similar to synesthesia, even if I’m not a synesthete in the proper physiological sense. I feel air vibrations as colors, sound frequencies as brightness. I cannot imagine my music/sound without a proper visual artwork.

Through my recent collaboration with the Mechanics and Acoustics Laboratory of CNRS (the French research institute), I improved my sound analysis concepts. Patrick Sanchez, a research engineer and sound expert, helped me improve my set of analysis patches and tools with his high level of expertise.

Julien’s waveform-based visuals.

I often put the amplitude/envelope of audio channels in matrices. This gives me a nice way to draw waveform graphs, and I can play with that by downsampling the signal and using the resulting points to drive other parameters.
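
A minimal Python sketch of that idea follows, assuming plain lists in place of Max matrices. The function names `envelope` and `to_points`, the block size, and the 640 by 480 plot area are hypothetical choices for illustration only.

```python
import math


def envelope(samples, block_size):
    """Peak amplitude per block: a crude envelope that also downsamples."""
    return [
        max(abs(s) for s in samples[i:i + block_size])
        for i in range(0, len(samples), block_size)
    ]


def to_points(env, width, height):
    """Spread envelope values (0..1) across a width x height plot as (x, y)."""
    last = max(len(env) - 1, 1)  # avoid dividing by zero for one value
    return [(i * (width - 1) / last, value * height)
            for i, value in enumerate(env)]


# Assumed test signal: a decaying 5-cycle sine, 1000 samples long.
signal = [math.sin(2 * math.pi * 5 * n / 1000) * (1 - n / 1000)
          for n in range(1000)]
env = envelope(signal, 100)          # 1000 samples become 10 values
points = to_points(env, 640, 480)    # ready to plot or to drive parameters
print(points[:3])
```

The same list of points can feed a drawing routine or be read as control values, which is the sense in which the graph "moves other parameters".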

Besides audio analysis, I often use MIDI data from my sequencers for live visuals. Sequencers are rich sources of data, and using that data can be much lighter on your CPU than pure real-time sound analysis.

For instance, say I’m triggering a long pad sound. As soon as it starts (i.e., a MIDI “note on” message is sent from the clip in my Live set), the MIDI instrument is triggered and produces a sound, but I’m also using another “helper” track to grab this MIDI and route it outside of Live into Max, where it triggers a movie to play, or starts a movement inside my OpenGL-based scene. Then, as soon as the long pad sound ends, a MIDI “note off” message is sent and grabbed by the Max 6 system. This stops the movie or the movement. This is another way to use MIDI: triggering and altering visuals in sync with the audio.
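
The note on/note off logic can be sketched in a few lines of Python. This is only an illustration of the idea, not the Max patch itself: the `VisualTrigger` class and its "start"/"stop" cues are hypothetical, and the messages are assumed to arrive as raw (status, note, velocity) values.

```python
NOTE_OFF = 0x80
NOTE_ON = 0x90


class VisualTrigger:
    """Turns note on/off messages into start/stop cues for a visual."""

    def __init__(self):
        self.held = set()  # notes currently sounding

    def handle(self, status, note, velocity):
        """Return "start", "stop", or None for one raw MIDI message."""
        kind = status & 0xF0  # strip the channel nibble
        if kind == NOTE_ON and velocity > 0:
            was_idle = not self.held
            self.held.add(note)
            return "start" if was_idle else None
        if kind == NOTE_OFF or kind == NOTE_ON:
            # A note on with velocity 0 is treated as a note off.
            if note not in self.held:
                return None
            self.held.remove(note)
            return "stop" if not self.held else None
        return None  # ignore controllers, pitch bend, and so on


trigger = VisualTrigger()
for message in [(0x90, 48, 100), (0x80, 48, 0)]:
    cue = trigger.handle(*message)
    if cue:
        print(cue)  # a real patch would start or stop the movie here
```

Tracking held notes means overlapping pads keep the visual running until the last one is released.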

Excerpt from a live performance of “Disrupt!on”

Could you walk me through the “Disrupt!on” project?

This project is about the disruption of signals, and was curated by François Larini for the Nouveau Musée National de Monaco. “Disrupt!on” is also the name of the related installation I exhibited in London in March and April at The Centre of Attention.

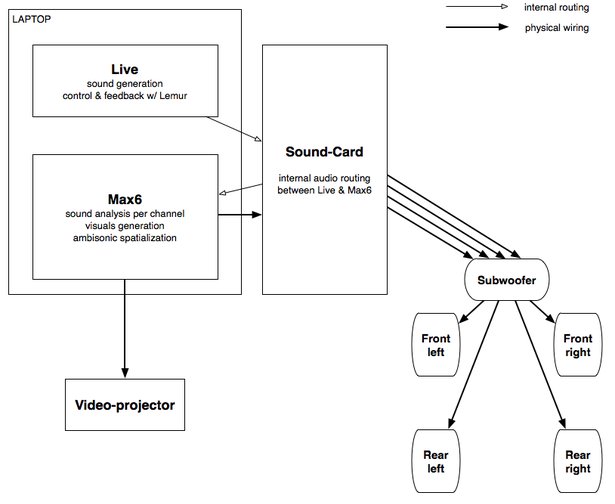

The experimental music I designed for the museum in Monaco was prepared together with visuals. I used four audio channels and a subwoofer for this performance, with a Genelec system that splits frequencies directly within the subwoofer. But I needed to route the channels from my set correctly. I used a very easy and basic routing method, which is summarized in this schematic:

Schematic for routing in “Disrupt!on”

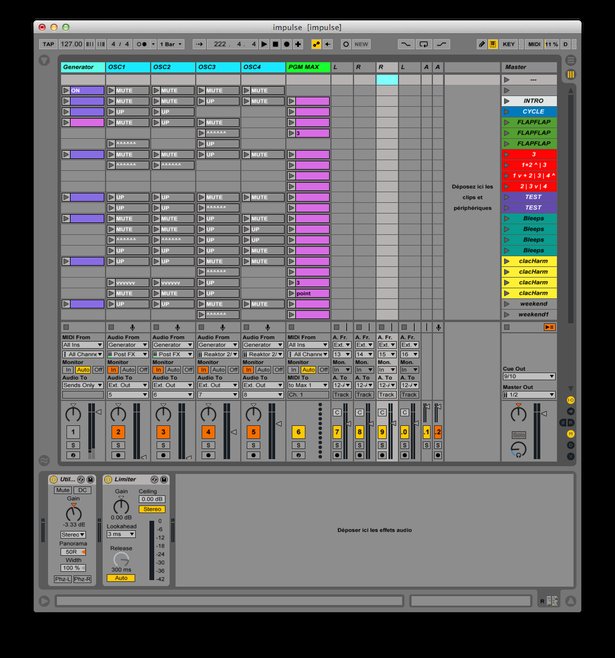

Live handles all sound generation. I’m using four tracks, one for each channel. By using source routing, I grab each of the four channels from my Reaktor-based generator patch. Each channel is sent to a different mono channel of my sound card with internal routing and loopback capabilities (I’m currently using the RME Fireface UC). Using the mixing software TotalMix, I then have an easy way to route each channel into my Max patch. This virtual routing is handled by the sound card hardware, which lightens the load on my laptop’s processor.

Julien’s Live Set for “Disrupt!on”

Then, my Max patch analyzes each audio channel it receives from the sound card to generate the visuals. Basically, I’m plotting each envelope (and sometimes sound frequency bands) on an X/Y graph. As with much of my work, “Disrupt!on” live is really minimalistic, even in its design.

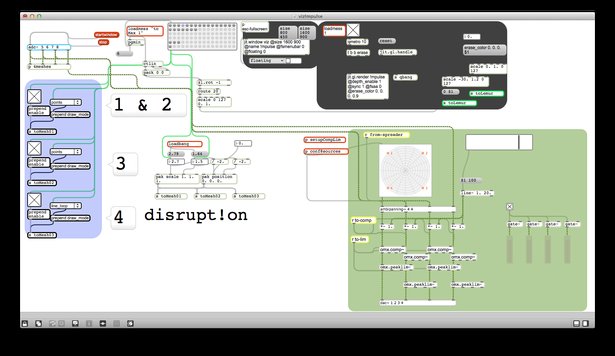

Julien’s Max patch for “Disrupt!on”

I’m using the Ambisonic externals by the Institute for Computer Music and Sound Technology.

They provide a nice way to grab my four channels and spatialize them according to the physical placement of my speakers and to some other parameters I can set dynamically in real time. In the case of Disrupt!on, I used a special and weird way to spatialize my sounds: the visualization of the sounds themselves. Let’s dig into that part a bit deeper, because it is one of the core concepts of this project.

I’m generating four audio signals. I’m placing them on the screen according to specific visual rules I can change on stage. Then the positions of the plots are fed back into the system to spatialize the sounds themselves. This produces more fragility and uncertainty, which is what I wanted to play with. So there is a direct correspondence between the visuals on the screen and the placement of the sounds in the room.
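
To make the screen-to-room feedback concrete, here is a small Python sketch that turns a plot position into gains for four speakers. Note the swap: it uses simple equal-power panning between the corners of a square rather than Ambisonics, and the speaker layout, the `speaker_gains` name, and the 0..1 screen coordinates are all assumptions.

```python
import math

# Assumed layout: one speaker in each corner of a unit square.
SPEAKERS = {
    "front_left": (0.0, 1.0),
    "front_right": (1.0, 1.0),
    "rear_left": (0.0, 0.0),
    "rear_right": (1.0, 0.0),
}


def speaker_gains(x, y):
    """Map a normalized screen position (0..1, 0..1) to four speaker gains."""
    x = min(max(x, 0.0), 1.0)  # clamp positions that leave the screen
    y = min(max(y, 0.0), 1.0)
    gains = {}
    for name, (sx, sy) in SPEAKERS.items():
        # Bilinear weight: 1 at the speaker's corner, 0 at the far edges.
        weight = (1 - abs(x - sx)) * (1 - abs(y - sy))
        # The square root keeps the summed power constant as the plot moves.
        gains[name] = math.sqrt(weight)
    return gains


# A plot drawn near the top left of the screen pulls its sound that way.
print(speaker_gains(0.2, 0.9))
```

Whatever rule moves a plot on screen then moves its sound in the room as well, which is the correspondence described above.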

Your blog is a source of much inspiration, and you recently wrote about a “really weird” experience in an anechoic chamber. For someone whose work revolves around sound, what did you discover in the absence of any?

I especially felt the absence of echoes of my own sound. While in the chamber, I was curious to speak. Maybe it was reassuring, but above all I was fascinated by the effect (or the absence of effect) of my own sounds on the external world.

Ryoji Ikeda’s db piece was designed for an anechoic chamber (and involved a panic button, actually). I’d really be interested in making some further experiments in this environment and designing an installation, too. I’d also involve lights, more than just displaying visuals through a video projector. It could also be a nice start for collaborating with Philips Lighting, with whom I have started a discussion about some future work.

If you could offer just one short tip to anyone making music with Live, what would it be?

Here’s a basic one, but a nice one to use:

- Take a sample and drag’n’drop it inside an audio track. Live will create an audio clip.

- Use the Slice to MIDI option by right-clicking over the audio clip. Live will then create a new MIDI track with a MIDI clip, triggering a Drum Rack already filled and set up with slices of your sample.

- Now, place the Random MIDI effect before the Drum Rack that contains your slices. Play with the “Chance” parameter and tweak the effect.

It will completely deconstruct the playback of your sliced sample, playing some slices before others and generally glitching the whole thing. By adding a Scale MIDI effect, you can also constrain the randomness a bit more and keep the alteration inside a specific range. I’d also add a Beat Repeat, remove the triplets by enabling the “No Triplets” option, then play with the grid size and the offset.

These basic elements can give you ideas to tweak beats and produce a lot of variation with only one initial sample. And all of this is absolutely non-destructive. This is also another example of what we can do with MIDI, even with audio at the beginning of the process.
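
For readers who want to see the logic outside of Live, the Python sketch below loosely imitates the chain described in the tip. It is not how Live's Random or Scale devices are implemented: `randomize` and `constrain` are hypothetical stand-ins, and the eight slices on notes 36 to 43 are an assumed Drum Rack layout.

```python
import random


def randomize(notes, chance, choices, rng):
    """With probability `chance`, shift a note up by 1..choices semitones."""
    out = []
    for note in notes:
        if rng.random() < chance:
            note += rng.randint(1, choices)
        out.append(note)
    return out


def constrain(notes, allowed):
    """Snap every note to the closest allowed note (a stand-in for Scale)."""
    return [min(allowed, key=lambda a: abs(a - n)) for n in notes]


slices = list(range(36, 44))  # assumed: eight slices on pads C1..G1
rng = random.Random(1)        # seeded so the sketch is repeatable
clip = slices * 2             # the original slice order, played twice
glitched = constrain(randomize(clip, 0.5, 4, rng), slices)
print(glitched)
```

The original `clip` list is never modified, which mirrors the non-destructive nature of the technique: set the chance to zero and the slices play back in their original order.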

Julien performs with Live and Push

Which other artists inspire your music? Your visuals?

I listen to Autechre and the Raster-Noton label very often, and to Lustmord and Geir Jenssen [Biosphere] a lot too. Their deepest ambient tracks are really interesting when I’m coding and programming complex structures. Sometimes I listen to absolutely chaotic IDM tracks when I’m patching. This can sound crazy, because it is not relaxing, but I often need that at these moments.

I’m really interested in the works and approaches of architects and designers. I think they are the most inspiring source for my own creation, beyond other musicians.

One artist I really like to read, listen to, and watch is Carsten Nicolai (aka Alva Noto). Few people in the music field know him as a sculptor, a designer, or an architect, and few people in these other fields know him as an experimental techno electronic musician. I like to make bridges between genres and fields, and I really like that he has his own way of creating.