Download the Live Set of Jinku’s New Track “Barda”

Nairobi has long been a center of musical diversity and experimentation – a place where global influences meet regional traditions. The fresh energies set free by these encounters inspire artist collectives like EA Wave, who push the boundaries of modern African music.

Jacob Soloman aka Jinku has grown up immersed in Nairobi’s thriving arts scene. As the founder of EA Wave, he’s been steadily refining his fusion of afro house, R&B, and African percussion into a genre he defines as tribal downtempo.

In last month’s XLR8R+ edition, Jinku released his new track “Barda”. We caught up with him from his adopted home in Stockholm for a chat about his techniques and creative strategies. Plus he shared a download of his Live Set for a direct look into his process.

Download the Live Set to Jinku’s track “Barda” here*

*Requires a Live 11 Suite license or the free trial.

Please note: this Live Set and included samples are for educational use only and cannot be used for commercial purposes.

Jinku, thanks for taking the time to talk to us about your work. How did you find your way to music-making while growing up in Nairobi?

I actually wanted to be a painter or graphic designer. But my parents were having none of that. They said I needed to become a doctor, engineer, or business person. They were worried that artists were some of the poorest people in our country. Back then there were no cultural reference points in Nairobi. There were no famous people making bank as artists. So I went and did a communications degree. During university, I still managed to learn Photoshop and Illustrator. Then I started doing artwork for music producers. So actually I entered the music scene through graphic design.

What influenced your transition from graphic design into music-making?

There was a musician I worked with called Saint Evo. He was making afro house and that kind of stuff. After watching how he produced I would start throwing in random ideas. Eventually, I was given a copy of Live to try and my whole mindset about African music and African rhythms changed. From there I formed EA Wave and I’ve just been grinding at it ever since, trying to create these alternative sounds in Nairobi.

Are you still releasing music with EA Wave?

As the collective became more well known there was more pressure to release music together. But lately, we’ve become like the Avengers! So it’s like when The Incredible Hulk, Iron Man and Captain America all do their thing but then come together. That’s what I feel is happening with EA Wave. Everyone is having their own adventures. But when the time feels right, we’ll put something out collectively.

Where did you meet?

In Nairobi, if you wanted to find the fresh sounds in the city you’d go to SoundCloud. That's where we all found each other. I think we were known as the SoundCloud era. When we met in real life no one knew each other's actual names. Everybody was being called by their usernames!

How is the local music scene in Nairobi lately?

Well, I think COVID really did a number on it. But there have been some small incremental things, like Spotify setting up in Kenya in 2019. So now we are starting to get localized playlists. That's helping the burgeoning scene and the younger cats who’ve come after me.

From when I started to where it is now, I can definitely see people are more expressive in Nairobi. We have a really strong queer scene in the city right now. There's a singer called Bakhita who’s advocating for this community. It's really nice to see that thriving.

You’ve described yourself as a sponge of different influences. What kinds of music inspire you?

Right now I love ‘90s hip hop and 2000s R&B. The first time I was introduced to afro house it was mind-blowing to hear African percussion in such a western genre. What I’m now doing is taking that basis of afro house percussion and putting it into R&B and hip hop. I call it tribal downtempo.

WAVE TAPES 01 - Jinku

There are a lot of those percussive influences coming through in the Live Set you’ve shared. How did you come about naming the track “Barda”?

Barda is actually a musician who’s part of this organic downtempo movement. When I made it I was thinking of her sound and what would complement it. I was actually trying to come up with another name, but it just felt like her.

When starting a project, are you more likely to pick up an instrument, or head straight to Live?

Recently I’ve been recording a lot of sketches on my phone, which I then drop into Live. Then I’ll use the tap tempo feature to match those sketches. A lot of my new songs have weird tempos like 91.83 or 80.47 BPM because I’m using my body’s actual internal rhythm to determine the speed.
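For readers curious how tapped timing turns into a tempo like 91.83 BPM, here is a minimal sketch of the arithmetic. It assumes the tempo is simply 60 divided by the average gap between taps; this is an illustration, not a description of how Live's tap tempo works internally, and the function name and tap times are hypothetical.

```python
from statistics import mean


def bpm_from_taps(tap_times):
    """Estimate a tempo in BPM from tap timestamps given in seconds.

    Assumption: tempo = 60 / average interval between consecutive taps.
    """
    if len(tap_times) < 2:
        raise ValueError("need at least two taps to measure an interval")
    # Gap between each tap and the one that follows it.
    intervals = [later - earlier for earlier, later in zip(tap_times, tap_times[1:])]
    if any(gap <= 0 for gap in intervals):
        raise ValueError("tap times must be strictly increasing")
    return 60.0 / mean(intervals)


if __name__ == "__main__":
    # Hypothetical, slightly uneven taps, as a hand on a desk might produce.
    taps = [0.0, 0.65, 1.31, 1.96, 2.61]
    print(round(bpm_from_taps(taps), 2))
```

Because the taps are averaged rather than snapped to a round number, the result rarely lands on a whole BPM value, which is consistent with the fractional tempos mentioned above.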

What does your typical creative process look like in the studio?

I have two layers. The creation process and the mixing process. The creation process is just about throwing everything at the wall. It's about finding sounds, getting a basic groove, and then trying a lot of combinations of things with it. I will do that until I get bored, then I’ll close the project down. When I come back to it I’ll throw everything on the timeline. I’ll start soloing different things to see what works together and where relationships are forming between different parts. Then I’ll start using the mute button (0 on the keyboard) to deactivate clips and build a song. Once I have an arrangement it goes into mixing. Personally, I really enjoy the mixing stage because that's what makes the track.

“Sound is like a block of clay and EQ is the sculptor”

All of the tracks in your Barda project have been rendered as audio. Was that a creative decision?

That's just how I work. I always bounce everything down to audio. I just don't like MIDI for some reason. When I bounce to audio it feels like I’m committed. Also, I want to be able to move my projects across different computers without having to worry about plugins. Moving those big sound libraries is always difficult. So as soon as I put an idea down I freeze it immediately. It's a very conscious decision.

You’ve used a lot of EQ devices running in serial on many of your channels. What’s the thinking behind this?

Sound is like a block of clay and EQ is the sculptor, that's how I see it. I read in forums you’re not supposed to create too many notches with one EQ. They say it's more destructive than subtractive. So it's better to do small things with different EQs because it gives a much cleaner sound. It's like you’re dividing the load between a number of EQ plugins rather than having one do all of the processing.

Jinku uses multiple EQ devices in his signal chains to spread the processing load

Did you record real percussive instruments for this project, or did you work with samples?

It’s a mixture of different loops that I cut to get certain accents. I then processed some of them through devices like Beat Repeat and Granulator and bounced them to audio.

Was any warping required?

I actually don't warp what I play. I don't quantize my drums or use swing templates, because that’s like copying the swing of a certain drummer. The reason we might like J Dilla’s drumming, for example, is because he played his drums using his own internal swing. That's why, when I do my drum programming I prefer to just keep it as is. If it's a bit wrong I’d much rather do it again than quantize it. It keeps it more performative. This way it’s more like having a conversation with your computer rather than your computer dictating what you create.

When building up this percussive section, how did you make all of the individual layers work together rhythmically and sonically?

OK, one thing – always tune your drums to the key of the song. Then it’s about adding some aggressive EQ, panning, leveling, and good mixing etiquette. I also squashed everything with a Drum Buss.

Do you use a tuning device to determine the key of your drums or is it done by ear?

By ear. Sometimes I will solo a melodic element in the song and then just start tuning each percussion clip one by one. I’ll keep tuning by ear until it feels like they’re all related and part of the same universe. If all your drums are in the key of the song it feels like they’re lifting the melodic structure.

What effect is the College Dropout Max for Live device having on your percussion master buss?

It's basically like tape saturation. So it rolls off all that annoying high end and gives things a nice round low end.

Jinku uses the College Dropout Max for Live device to roll off unwanted low and high frequencies

Throughout the piece, there’s a Rhodes chord line that gives the track a dubby vibe. How did you process it?

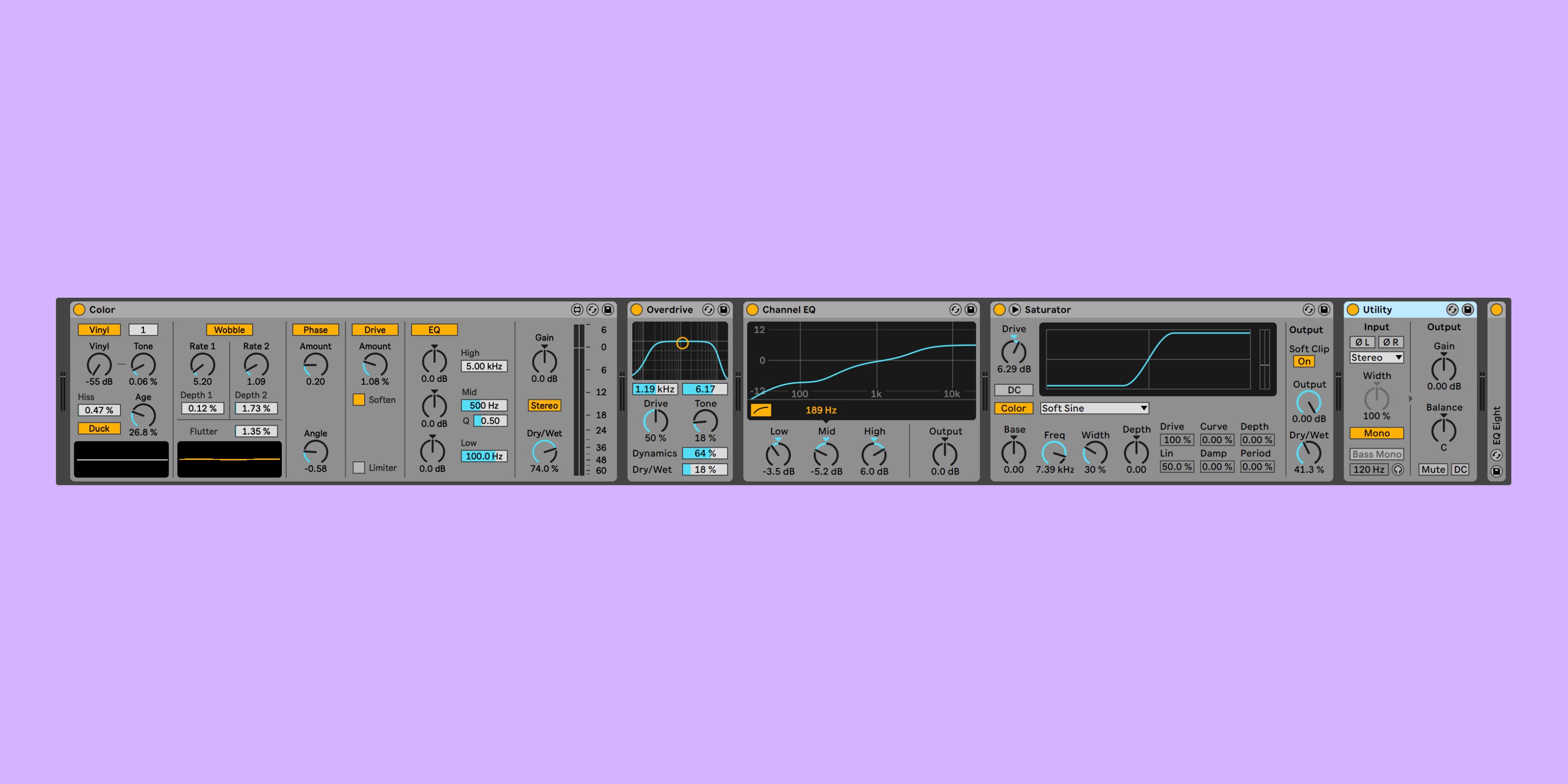

Those chords are from Arturia Analog Lab and I’ve added the Max audio effect Color. Color’s Phase gave the chord more depth while the Wobble made it veer in and out. There’s a bit of hiss generated by the Age parameter which gives the chord a vintage sound depending on how high you crank it.

Jinku uses the Color Max audio effect to add phase, wobble and hiss to his chords

You’ve created a big bridge section with trumpets. Were those taken from live recordings or did you use samples?

The trumpet is played by Samson Maiko. He’s an instrumentalist from back home in Nairobi.

You used a Max for Live Device called Track Stereo Pan a lot in this section. What's it doing?

This device enables true stereo panning. I use it as a correction tool when dealing with samples that are already panned in a certain way. If I want to use automation with Live’s own Stereo Pan mode, it can get tedious editing the left and right channels. This plugin allows me to draw automation more easily. It’s a time saver and keeps me in a flow state.

A lot of the width and space in this section seems to be coming from an Effect Rack containing Reverb, Spectral Blur, Delay, and Grain Delay devices. What was the thinking behind this effects chain?

I used Spectral Blur more like a reverb than a blur. Using its Halo mode with the Residual and Frequency parameters gave a really washed-out sound. When I mixed it with the dry signal it sounded like a big hall verb.

The Filter Delay is more for space. It was about pushing the delay into the stereo field more. I was able to EQ the left and right sides individually on this device, so it gave me more control over the delay signals.

Some of your trumpets have high-pitched crystallizer effects coming through. Which device did you use to create those?

I wanted to give the trumpets more high-end sparkle so I used Grain Delay with its pitch set to 12. At low volumes, this gives the trumpet more high-frequency presence. It also helps with the stereo width.

Jinku uses an Effect Rack containing Reverb, Blur, Delay, and Grain Delay to create space, width, and high-frequency harmonics in his trumpets section
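As a rough illustration of why a pitch setting of 12 adds sparkle: assuming the value is in semitones of equal temperament, a shift of 12 is one octave, which doubles every frequency in the delayed signal. The helper names below are hypothetical and are not part of any Live API.

```python
def semitone_ratio(semitones):
    """Frequency ratio for a shift of `semitones` in 12-tone equal temperament."""
    return 2.0 ** (semitones / 12.0)


def shifted_frequency(freq_hz, semitones):
    """Frequency in Hz after transposing `freq_hz` by `semitones`."""
    return freq_hz * semitone_ratio(semitones)


if __name__ == "__main__":
    # A hypothetical trumpet note at 440 Hz, shifted up by 12 semitones.
    print(shifted_frequency(440.0, 12))
```

The same ratio applies when transposing a percussion clip by a few semitones to sit in the key of a song.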

How did you build up the rhythmic melodies in your Arps section?

I have an Arturia Key Step Pro with an arp function on it which was triggering Arturia Analog Lab. I was playing with the swing, recording different takes and bouncing them down. Instead of playing the arpeggios to the grid, I was riding their speed. This helped them to catch the percussion in a certain way. It gave the feeling of both elements playing together. On Analog Lab I was also tweaking the filters, making them rise and fall to create lots of movement.

Where did the African vocals come from?

The vocals were from Splice. I really like how Splice has democratized music-making. You have to be more creative to stand out because these sounds are accessible to everyone. But in the past, I've recorded a lot of vocalists I found online through Twitter, or in Nairobi through word of mouth.

Lately, I’ve reached a point where I find it very hard to imagine a completed track without a vocal contribution. But that’s something I am trying to get out of now. I’m trying to remind myself you can make purely instrumental music and consider it finished.

One of your return channels is called Devil Loc. What’s happening here?

On this return channel, I’m squeezing the life out of the song with a number of devices like EQ Eight, Saturator, and Dynamic Tube. I grouped every channel in the project into a mix bus so that I could mix this return channel back in. This had the effect of warming up the mids basically.

What kinds of master effects have you added to the mix bus itself?

I’ve used Utility to mono the bass and added some mid-side EQ. Then I’ve used Final Glow which is an Effect Rack that has a ton of different things like saturation, overdrive, and compression. It works like a very aggressive compressor. But you’ll see the Macro Controls are only set to 1, so it's just tickling the signal chain.

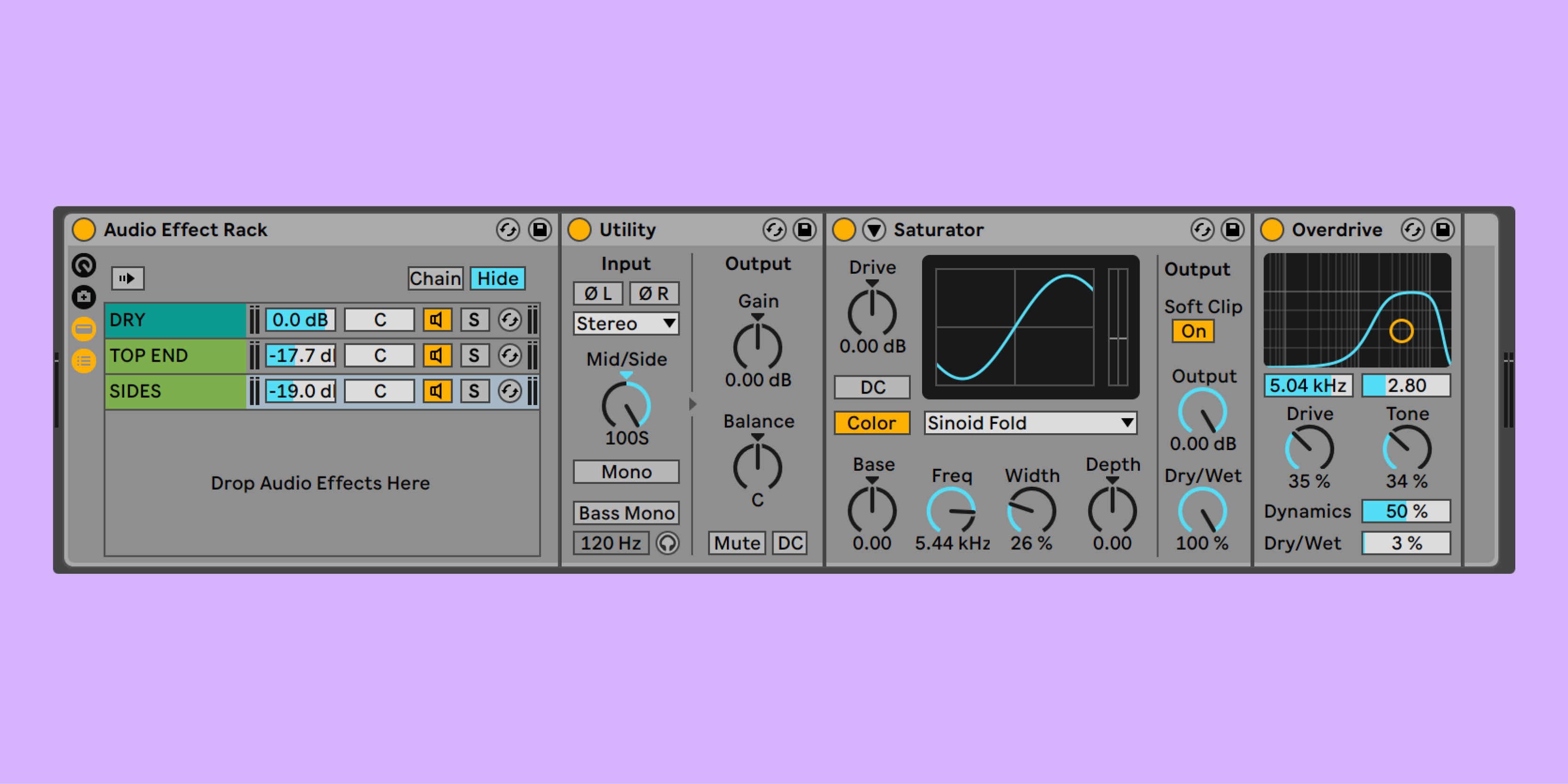

You’ll see another Effect Rack where I did some processing to the top end, to give it some sparkle. The first layer of the chain is left dry so that I can process the high frequencies in subsequent chains without affecting the whole signal. Then you will see I’ve high passed the signal and added Saturator.

In the third chain, I’ve toggled a Utility device to Mid/Side mode. This allowed me to add saturation and overdrive just to the sides.

Jinku uses an Effect Rack to process specific frequency bands on his mix bus channel
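To make the Mid/Side idea concrete, here is a minimal sketch of processing only the sides of a stereo signal. It assumes the common convention mid = (L + R) / 2 and side = (L - R) / 2, and uses a `tanh` soft clip as a stand-in for saturation; the scaling inside Live's Utility and the character of Saturator may differ, and all function names here are hypothetical.

```python
import math


def encode_mid_side(left, right):
    """Split left/right sample lists into mid and side sample lists."""
    mid = [(l + r) / 2.0 for l, r in zip(left, right)]
    side = [(l - r) / 2.0 for l, r in zip(left, right)]
    return mid, side


def decode_mid_side(mid, side):
    """Rebuild left/right sample lists from mid and side sample lists."""
    left = [m + s for m, s in zip(mid, side)]
    right = [m - s for m, s in zip(mid, side)]
    return left, right


def saturate_sides(left, right, drive=2.0):
    """Apply a tanh soft clip (stand-in saturation) to the side signal only."""
    mid, side = encode_mid_side(left, right)
    side = [math.tanh(drive * s) for s in side]
    return decode_mid_side(mid, side)


if __name__ == "__main__":
    # Hypothetical samples: the first pair is identical (mono), the second differs.
    print(saturate_sides([0.5, 0.5], [0.5, -0.25]))
```

Content that is identical in both channels has a side signal of zero, so it passes through untouched. Only the differences between left and right pick up the saturation.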

Would you typically leave all of this processing in place before sending the track to a mastering engineer?

Definitely. As you can see, this track is peaking at -6 dB. That's how I sent it off to be mastered.

This is also why I have a Utility device at the beginning and end of the master effects chain. If you keep all your channels low it’s much easier to gain stage and make sure every plugin is fed the level at which it sounds best. Then you can use the Utility devices to trim the signal as needed.
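The gain staging described above rests on simple decibel arithmetic. The sketch below assumes peak levels measured in dB relative to full scale, where 0 dB corresponds to an amplitude of 1.0; the function names and example levels are hypothetical, not taken from the Live Set.

```python
import math


def db_to_amplitude(db):
    """Convert a level in dB to a linear amplitude factor (0 dB -> 1.0)."""
    return 10.0 ** (db / 20.0)


def amplitude_to_db(amplitude):
    """Convert a positive linear amplitude to a level in dB."""
    if amplitude <= 0:
        raise ValueError("amplitude must be positive")
    return 20.0 * math.log10(amplitude)


def trim_needed_db(current_peak_db, target_peak_db):
    """Gain change in dB that moves the current peak to the target peak."""
    return target_peak_db - current_peak_db


if __name__ == "__main__":
    # A peak of -6 dB is roughly half of full-scale amplitude.
    print(round(db_to_amplitude(-6.0), 3))
    # Hypothetical mix peaking at -2.5 dB that should leave at -6 dB.
    print(trim_needed_db(-2.5, -6.0))
```

A trim computed this way is the kind of value that could be dialed into a gain control at the end of a chain to bring the peak down to the intended headroom.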

Jinku, thanks for sharing so many insights into the production of your track. What music projects do you have coming up in 2022?

Thanks for the opportunity! So, in June I have an album coming out called Passenger 555 featuring a Kenyan singer called Karun. It’s a seven-track album of cosmic R&B. It’s really percussive and synthy with some heavenly vocals.

In July I have a tape coming out called Oasis Park. It's about my journey from Nairobi to my first winter in Sweden. It's an amalgamation of Kenyan artists and Swedish musicians coming together in proper tribal downtempo fashion.

Keep up with Jinku on SoundCloud, Facebook and Instagram.

Text and interview by Joseph Joyce.

A version of this article appeared on XLR8R+.